New Method Keeps Chatbots Sharp in Long Conversations

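When chatbots like ChatGPT hold long conversations, their performance can deteriorate once the key-value (KV) cache, the model's memory of the conversation so far, fills up and the earliest tokens are evicted. Researchers found that keeping the first few tokens in the cache prevents this collapse.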
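Here is a toy sketch of the failure. It models a fixed-size cache that uses a sliding window, a common way to bound memory that discards the oldest tokens first. It tracks token positions only (no real key/value tensors), and the helper name `sliding_window_cache` is hypothetical, not part of any library.

```python
from collections import deque

def sliding_window_cache(token_ids, capacity):
    """Hypothetical helper: keep only the `capacity` most recent token positions."""
    cache = deque(maxlen=capacity)  # a full deque drops items from the left (oldest first)
    for tok in token_ids:
        cache.append(tok)
    return list(cache)

positions = list(range(10))  # token positions 0..9 in a long conversation
kept = sliding_window_cache(positions, capacity=6)
print(kept)  # [4, 5, 6, 7, 8, 9]: the first tokens have been evicted
```

Once the conversation outgrows the window, the earliest positions are gone. This is the situation the researchers found harmful.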

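The first tokens in the cache act as "attention sinks". The model directs a large share of its attention to them regardless of their content, so evicting them destabilizes its predictions. In the researchers' experiments, keeping just four sink tokens at the start of the cache was enough to restore performance.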
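The fix can be sketched by pinning the first few positions and letting only the rest of the cache slide. This is a simplified illustration of the eviction policy under stated assumptions. The helper `sink_plus_window_cache`, the window size of 6, and the position-only representation are all hypothetical. The real method operates on per-layer key/value tensors and assigns positional information based on positions within the cache rather than in the original text.

```python
from collections import deque

def sink_plus_window_cache(token_ids, num_sinks=4, window=6):
    """Hypothetical helper: keep the first `num_sinks` tokens plus the `window` most recent ones."""
    sinks = []
    recent = deque(maxlen=window)  # rolling window for everything after the sinks
    for tok in token_ids:
        if len(sinks) < num_sinks:
            sinks.append(tok)   # the first tokens are pinned and never evicted
        else:
            recent.append(tok)  # later tokens slide; the oldest non-sink token is evicted first
    return sinks + list(recent)

print(sink_plus_window_cache(list(range(20))))
# [0, 1, 2, 3, 14, 15, 16, 17, 18, 19]
```

The cache never holds more than `num_sinks + window` entries, so memory stays bounded however long the conversation runs. The four earliest tokens remain available as sinks throughout.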

In the researchers' streaming benchmarks, the new StreamingLLM method ran up to 22.2 times faster than a sliding-window baseline that recomputes the cache for each new token.
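The speed gap comes from reuse. The recomputation baseline rebuilds key/value states for the whole recent window before emitting every new token. StreamingLLM instead reuses its cached states and computes attention only for the new token.

The toy count below compares attention dot products per generated token under those two assumptions. It ignores feed-forward layers, memory traffic, and hardware effects. It therefore illustrates the scaling trend but does not reproduce the measured 22-fold figure. Both function names are hypothetical.

```python
def recompute_cost(window):
    """Hypothetical: query-key dot products to rebuild a causal window of `window` tokens."""
    # With causal attention, the i-th token attends to i tokens: 1 + 2 + ... + window.
    return window * (window + 1) // 2

def cached_cost(cache_size):
    """Hypothetical: query-key dot products when only the new token attends to cached keys."""
    return cache_size

for L in (256, 1024, 4096):
    ratio = recompute_cost(L) / cached_cost(L)
    print(f"window={L}: recompute/cached attention work = {ratio:.1f}x")
```

Recomputation work grows roughly with the square of the window, while cached decoding grows linearly. The gap therefore widens as the window gets longer.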

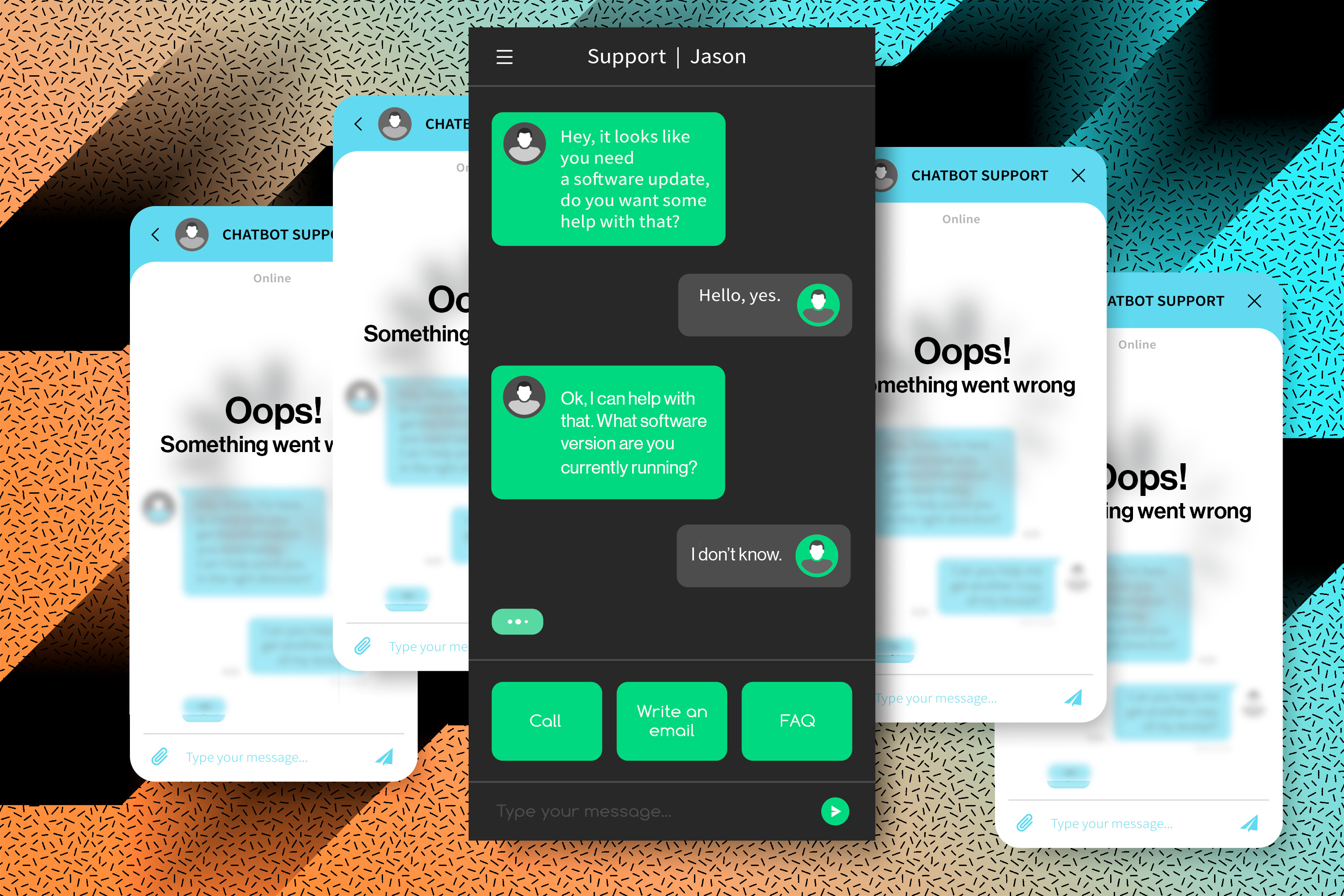

StreamingLLM could enable chatbots to have all-day conversations without needing constant reboots.

The method could also make chatbots practical for long-running tasks such as copywriting, editing, or generating code.