Posted 2/26/2024, 12:06:12 PM

AMD Preparing Enhanced MI300 AI Chips to Rival Nvidia's Latest Offerings

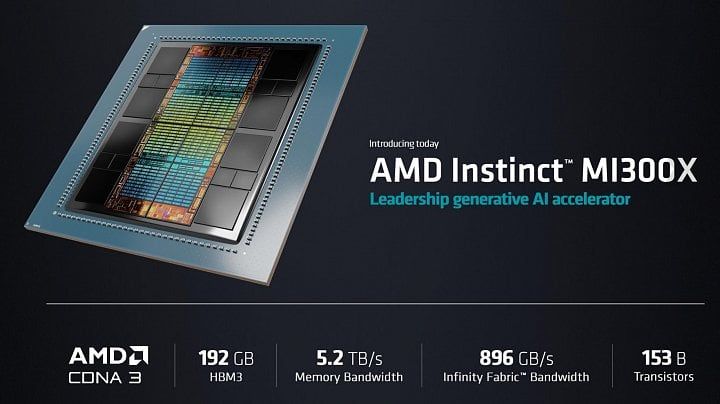

- AMD's Instinct MI300 AI accelerators may soon get refreshed versions with more memory, using HBM3E

- Switching from the current HBM3 to HBM3E would significantly boost memory bandwidth

- The move comes as AMD competes with Nvidia's new H200 GPUs, which carry 141GB of HBM3E

- AMD claims its current 192GB MI300X outperforms Nvidia's 80GB H100, the GPU widely used by hyperscalers

- AMD says it expects a "multiyear roadmap" of competitive back-and-forth with Nvidia in AI accelerators