Artists Subtly Poison Images to Sabotage AI Systems Scraping Web Data

-

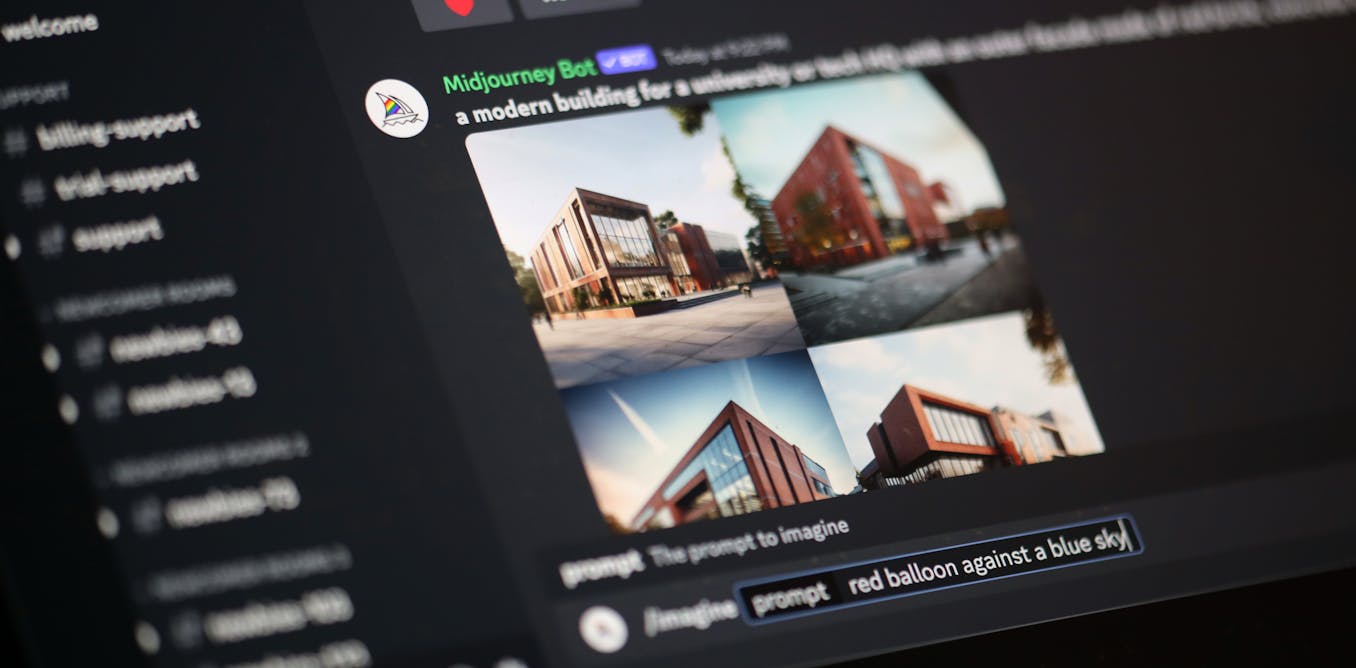

Artists are subtly altering their images, a technique called "data poisoning", to sabotage AI image generators trained on images scraped without permission. Models trained on the altered images can produce unpredictable and incorrect results.

-

The "Nightshade" tool poisons images by tweaking pixels so that the images appear normal to humans but wreak havoc on computer vision systems.

-

Poisoned images in an AI's training data can cause it to learn illogical associations, such as linking balloons to eggs, or to render dogs with six legs.

-

Proposed solutions include scrutinizing data sources more closely, using ensemble modeling to detect outliers, checking accuracy against curated test datasets, and rethinking the assumption that all online data is fair game.
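To make the ensemble idea concrete, here is a minimal sketch of one way it could work. It is not a method described in the article. It assumes each image has already been reduced to a short numeric feature vector, and the function names (`train_centroids`, `predict`, `flag_suspects`), the toy nearest-centroid model, and the sample data are all hypothetical. Several simple models are trained on different random subsets of the data, and any sample whose label disagrees with the ensemble's majority vote is flagged for review.

```python
import random
from collections import Counter

def train_centroids(samples):
    """Return {label: mean feature vector} for a list of (features, label)."""
    sums, counts = {}, Counter()
    for features, label in samples:
        acc = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            acc[i] += value
        counts[label] += 1
    return {label: [v / counts[label] for v in acc] for label, acc in sums.items()}

def predict(centroids, features):
    """Predict the label whose centroid is closest to the feature vector."""
    def sq_dist(centroid):
        return sum((a - b) ** 2 for a, b in zip(features, centroid))
    return min(centroids, key=lambda label: sq_dist(centroids[label]))

def flag_suspects(samples, n_models=15, subset_fraction=0.6, seed=0):
    """Return indices of samples whose label disagrees with the ensemble vote."""
    rng = random.Random(seed)
    subset_size = max(2, int(len(samples) * subset_fraction))
    # Each model sees a different random subset of the (possibly poisoned) data.
    models = [train_centroids(rng.sample(samples, subset_size))
              for _ in range(n_models)]
    suspects = []
    for index, (features, label) in enumerate(samples):
        votes = Counter(predict(model, features) for model in models)
        majority_label, _ = votes.most_common(1)[0]
        if majority_label != label:
            suspects.append(index)
    return suspects

# Hypothetical toy data: two well-separated clusters of feature vectors,
# plus one sample that looks like a "cat" but carries the label "dog".
dogs = [([i * 0.1, i * 0.1], "dog") for i in range(10)]
cats = [([10 + i * 0.1, 10 + i * 0.1], "cat") for i in range(10)]
poisoned = ([10.2, 10.1], "dog")
samples = dogs + cats + [poisoned]

print(flag_suspects(samples))  # flags the mislabeled sample at index 20
```

In this toy data the poisoned sample is easy to spot because it sits far from the other samples that share its label. Real poisoned images are built to be much harder to separate, so a check like this would be only one signal among several.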

-

Data poisoning belongs to a longer line of adversarial techniques, such as those designed to defeat facial recognition, and it raises broader questions about how the technology is governed.