OpenAI Details Work on Controlling Potential Superhuman AI to Ensure Safety

- OpenAI published a paper detailing work by Ilya Sutskever's team on controlling a potential superhuman AI system to ensure it remains safe.

-

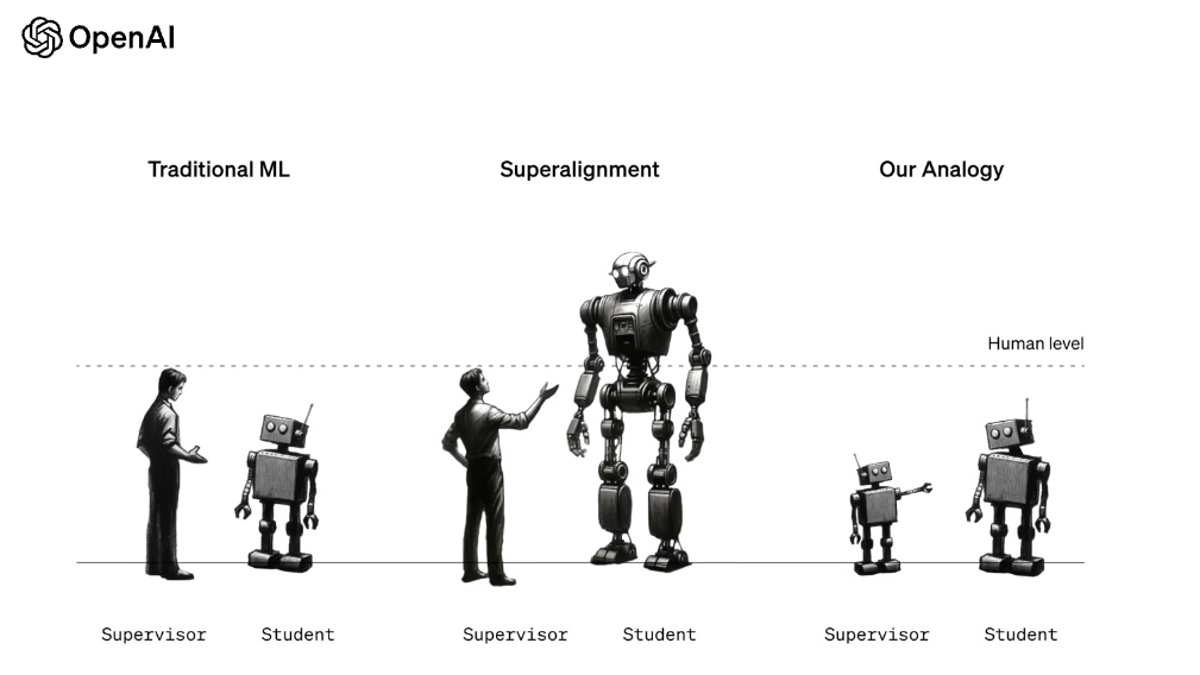

The proposed solution involves using smaller AI models to train and "align" larger, more capable AI models that could become superhuman.

- This is the first paper from Sutskever's "Superalignment" team at OpenAI, which was formed in July 2023.

- Sutskever's current role and status at OpenAI remain unclear after the leadership changes and disputes of late 2023.

- The paper confirms OpenAI is actively developing tools to control an AGI system, but does not confirm the existence of an AGI.