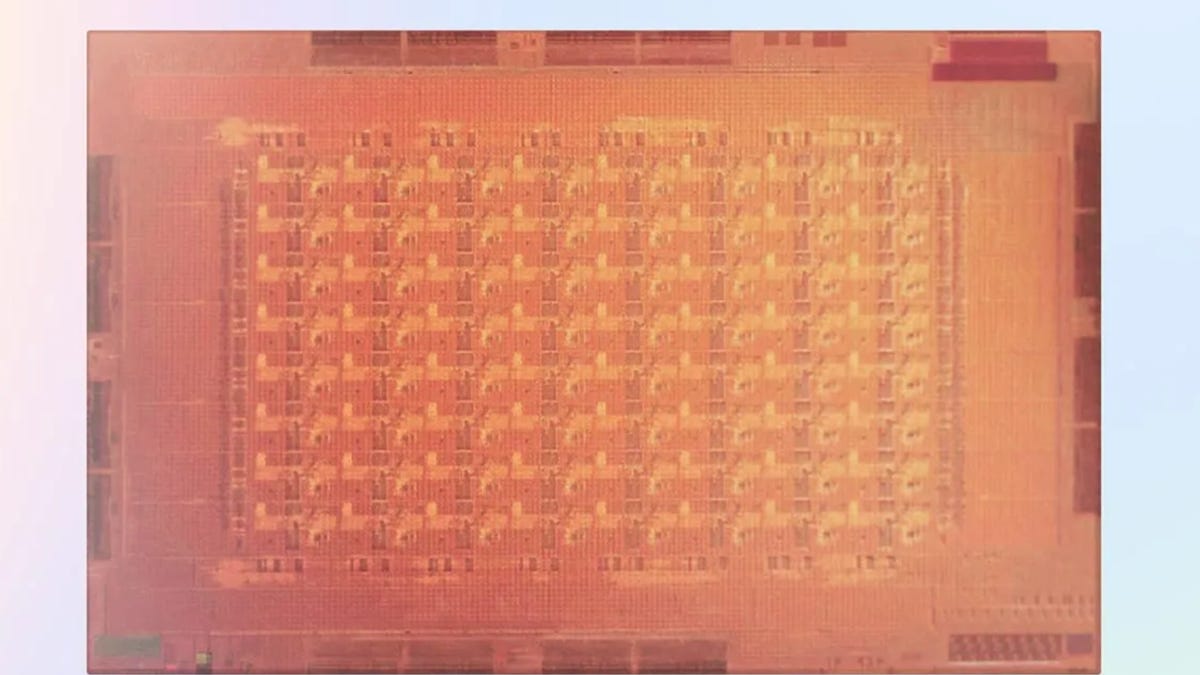

Meta Unveils Next-Gen AI Chip With 3X Speed and Memory

- Meta unveils MTIA v2, the second generation of its Meta Training and Inference Accelerator chip for AI workloads

- MTIA v2 has 3x the local memory per processing element of v1 and twice its on-chip SRAM

- Meta reports roughly 3x v1's performance across four key models, and about 7x v1's peak compute on sparse workloads

- MTIA v2 has 2.4 billion gates and 103 million flip-flops

- Meta plans to continue investing in custom hardware like MTIA for AI workloads