Meta Unveils Next-Gen AI Chip, Doubles Performance to Reduce Reliance on Nvidia GPUs

-

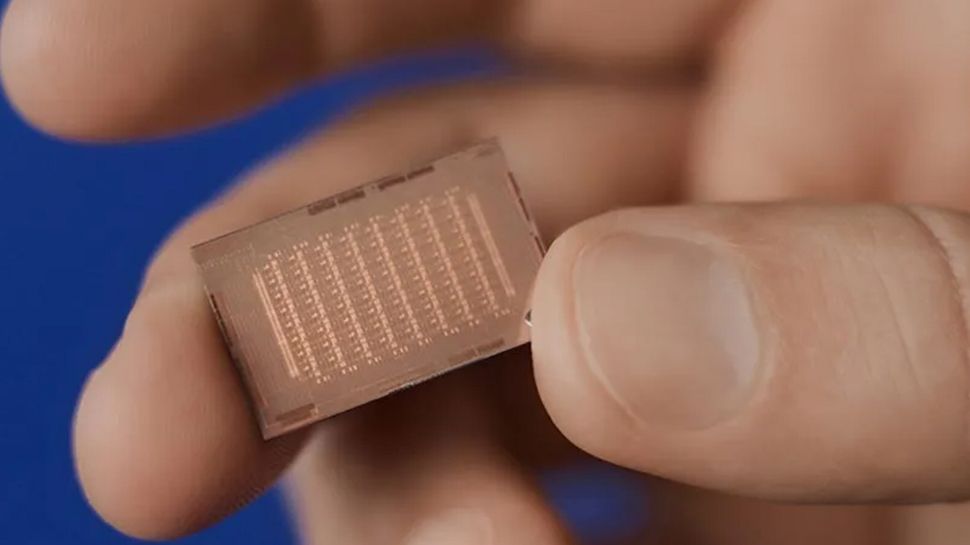

Meta unveiled its second-generation AI inference accelerator, MTIA, which doubles compute and memory bandwidth over the previous version.

- The new MTIA architecture balances compute, memory bandwidth, and memory capacity to serve ranking and recommendation models.

- Key upgrades over the first MTIA include larger local storage, more on-chip SRAM, and greater LPDDR5 capacity.

- Meta co-designed the MTIA software stack with the new hardware to optimize inference performance.

- Although MTIA does not yet drastically reduce Meta's reliance on Nvidia GPUs, it is another step toward the company's goal of depending less on external AI hardware.