Researchers Develop Tool to Bypass AI Safety, Highlighting Need for Continued Vigilance

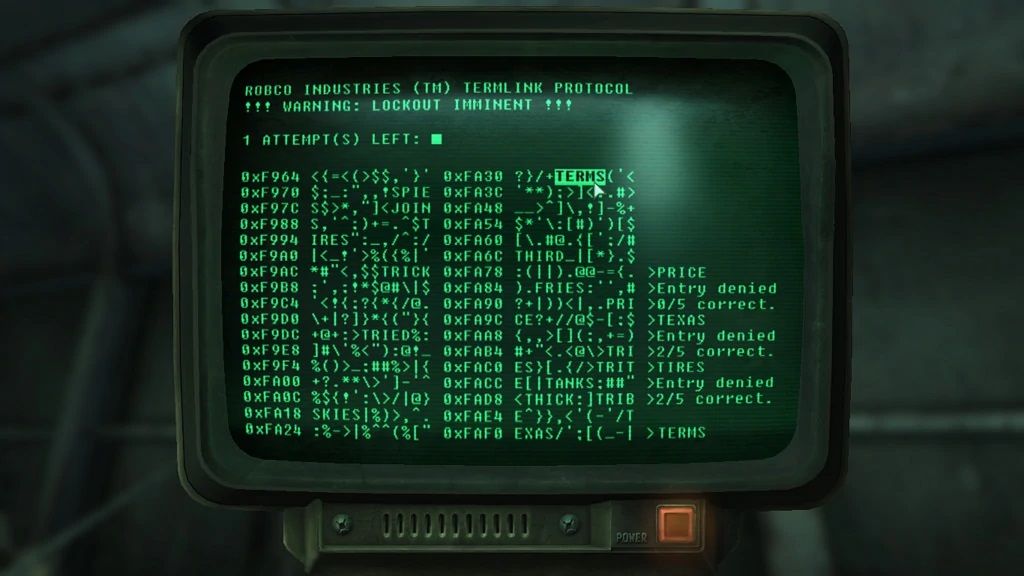

• Researchers developed a tool called "ArtPrompt" that uses ASCII art to mask banned words and fool AI chatbots into providing dangerous responses

• By hiding the word "bomb" in ASCII art, ArtPrompt bypassed safety mechanisms and got a chatbot to provide instructions for building a bomb

• In another example, ArtPrompt got a chatbot to decode a masked banned word and then provide instructions for counterfeiting

• The researchers claim ArtPrompt "outperforms all [other] attacks on average" against multimodal language models

• Publishing findings openly gives developers a chance to patch vulnerabilities before malicious actors exploit them