Scaling AI Models in Production Presents Challenges; BentoML Offers Solutions for Cloud Deployment and Traffic Management

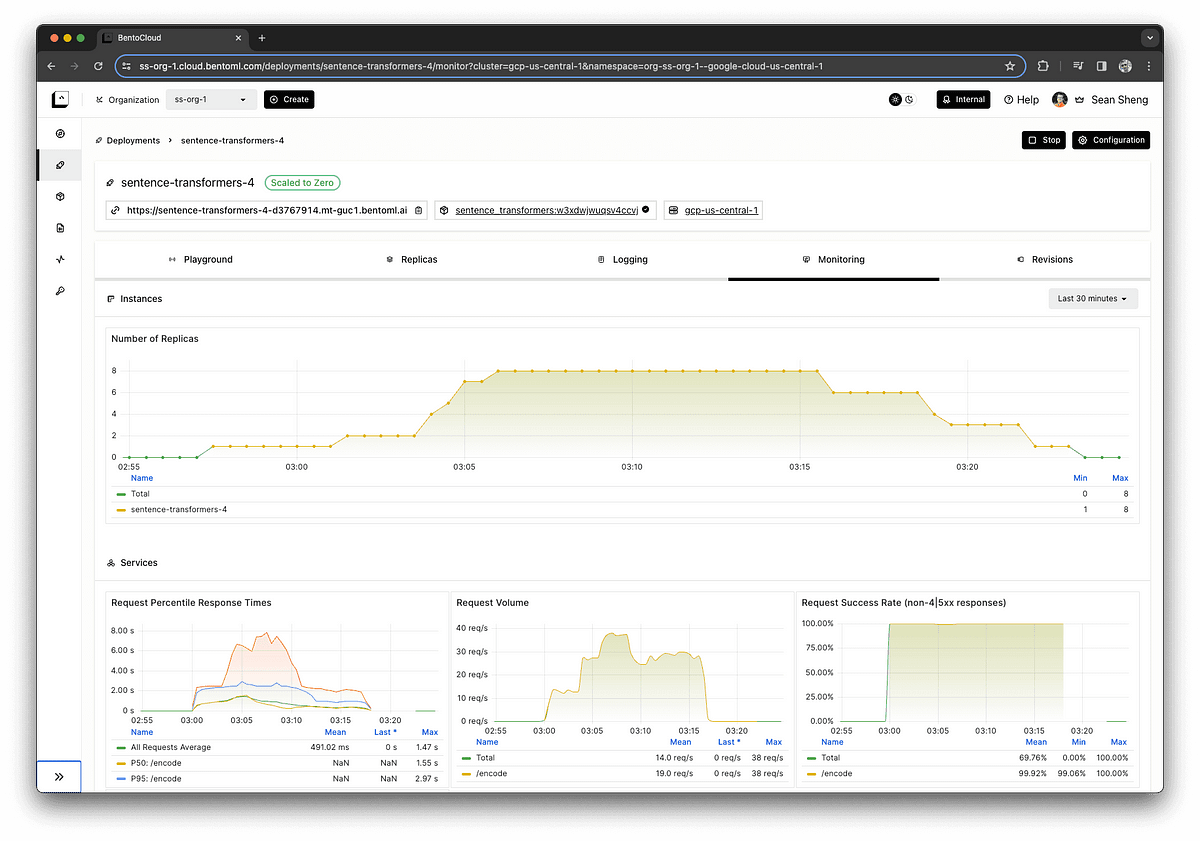

Scaling open-source AI models in production presents challenges such as long cold-start times, unpredictable costs, and difficulty handling traffic spikes.

Solutions include optimizing cloud provisioning, container images, and model loading through techniques such as standby instances, on-demand pulling, and distributed caches.
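
The standby-instance idea can be sketched as a pool that keeps a few replicas already provisioned, so that scaling up hands out a ready replica instead of paying the full cold start. This is a minimal illustration under stated assumptions, not BentoML's implementation: `StandbyPool`, `Replica`, and both timing constants are hypothetical, and the refill that a real system would run in the background happens synchronously here.

```python
from __future__ import annotations

from collections import deque
from dataclasses import dataclass

# Hypothetical timings, for illustration only.
COLD_START_S = 300.0    # provision a GPU node, pull the image, load weights
STANDBY_START_S = 5.0   # route traffic to an already-provisioned standby replica


@dataclass
class Replica:
    replica_id: int


class StandbyPool:
    """Keeps `standby_size` pre-provisioned replicas ready for scale-up (hypothetical)."""

    def __init__(self, standby_size: int) -> None:
        self.standby_size = standby_size
        self._next_id = 0
        self.standby: deque[Replica] = deque()
        self.active: list[Replica] = []
        self._refill()

    def _provision(self) -> Replica:
        # Stand-in for the slow path: new node, image pull, model load.
        replica = Replica(self._next_id)
        self._next_id += 1
        return replica

    def _refill(self) -> None:
        # A real system would top up standby in the background, off the request path.
        while len(self.standby) < self.standby_size:
            self.standby.append(self._provision())

    def scale_up(self, n: int) -> list[float]:
        """Add n active replicas and return the startup latency each one incurs."""
        latencies = []
        for _ in range(n):
            if self.standby:
                # Fast path: hand over a replica that is already provisioned.
                self.active.append(self.standby.popleft())
                latencies.append(STANDBY_START_S)
            else:
                # Slow path: standby is exhausted, so this replica cold-starts.
                self.active.append(self._provision())
                latencies.append(COLD_START_S)
        self._refill()
        return latencies
```

With `StandbyPool(2)`, calling `scale_up(3)` yields latencies of `[5.0, 5.0, 300.0]`: the first two replicas come from standby and only the third pays a cold start. The trade-off is cost, since standby replicas are paid for while idle, so the pool size becomes a knob between startup latency and spend.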

The key metric for scaling is concurrency (the number of requests being processed at the same time) rather than utilization, because it reflects load more directly and enables more accurate autoscaling.
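
A concurrency-based scaling rule can be sketched as one function: divide the number of in-flight requests by a target concurrency per replica and round up. `desired_replicas` and its default bounds are hypothetical; the right target depends on the model, batch size, and GPU, and a production autoscaler would typically also smooth the signal over a time window to avoid flapping.

```python
import math


def desired_replicas(in_flight: int, target_concurrency: int,
                     min_replicas: int = 1, max_replicas: int = 10) -> int:
    """Return how many replicas keep per-replica concurrency at or below the target.

    in_flight: requests currently being processed across the deployment.
    target_concurrency: hypothetical per-replica limit, tuned per model and GPU.
    """
    if target_concurrency <= 0:
        raise ValueError("target_concurrency must be positive")
    # Round up: a partially filled replica still has to exist.
    needed = math.ceil(in_flight / target_concurrency)
    # Clamp to configured bounds (e.g. a floor of warm replicas, a cost ceiling).
    return max(min_replicas, min(max_replicas, needed))
```

For example, 45 in-flight requests with a target of 8 per replica gives `ceil(45 / 8) = 6` replicas, an idle deployment stays at the `min_replicas` floor, and 200 requests is capped at `max_replicas`.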

A request queue in front of the model servers acts as a buffer and orchestrator, holding requests until a server has capacity so that no individual server gets overwhelmed.
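
The buffering role of the queue can be sketched with `asyncio`, assuming two hypothetical servers that each accept at most `max_concurrency` requests at once. Servers pull from a shared queue only when they have a free slot, so a burst waits in the queue instead of piling onto one server. This is an illustration of the idea, not BentoML's implementation; the server names, limits, and sleep standing in for inference are all placeholders.

```python
import asyncio


async def simulate_spike(num_requests=20, servers=("gpu-0", "gpu-1"), max_concurrency=2):
    """Drain a burst of requests through a shared queue (hypothetical setup)."""
    queue = asyncio.Queue()
    for request_id in range(num_requests):
        queue.put_nowait(request_id)  # the whole spike lands in the queue at once

    in_flight = {s: 0 for s in servers}
    peak = {s: 0 for s in servers}
    handled = {s: [] for s in servers}

    async def slot(server):
        # One slot = one unit of server capacity; it pulls work only when idle.
        while True:
            try:
                request_id = queue.get_nowait()
            except asyncio.QueueEmpty:
                return  # queue drained; a long-lived worker would `await queue.get()`
            in_flight[server] += 1
            peak[server] = max(peak[server], in_flight[server])
            await asyncio.sleep(0.001)  # stand-in for model inference
            in_flight[server] -= 1
            handled[server].append(request_id)

    # Each server runs exactly `max_concurrency` slots, which caps its load.
    await asyncio.gather(*(slot(s) for s in servers for _ in range(max_concurrency)))
    return peak, handled


peak, handled = asyncio.run(simulate_spike())
print(peak)
```

Because capacity is expressed as a fixed number of pulling slots, no server's peak concurrency can exceed `max_concurrency`, however large the burst; excess requests simply wait longer in the queue. A pull model like this is one way to realize the buffer; push-based load balancers with per-server limits are another.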

These lessons led to the creation of BentoML, a platform that encapsulates what was learned about scaling model deployments on GPUs.