Watermarks Fail to Stem Flood of AI-Generated Misinformation

- Watermarking technology promoted by tech companies as a way to combat AI misinformation is easily bypassed and removed.

-

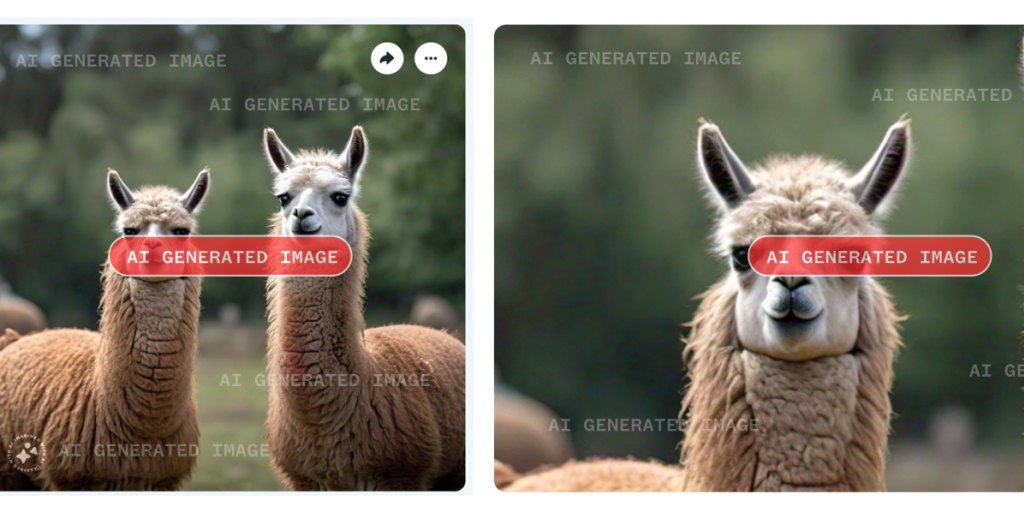

Both invisible metadata watermarks and visible labels on AI-generated images can be stripped through simple methods like cropping and screenshotting.

- Major platforms such as Meta have not strictly enforced labeling of AI content, and even high-profile users can crop the labels out.

- Sophisticated watermarks designed to withstand manipulation can still be removed by anyone with enough skill and motivation.

- Deepfakes used for scams, nonconsensual fake porn, and political disinformation show the limits of relying on watermarks to solve AI harms.