AI executives may be exaggerating the dangers of artificial intelligence in order to advance their own interests, according to an analysis of responses to proposed AI regulations.

The U.S. is falling behind in regulating artificial intelligence (AI), while Europe has passed the world's first comprehensive AI law. President Joe Biden recently met with industry leaders to discuss the need for AI regulation, and companies pledged to develop safeguards for AI-generated content and prioritize user privacy.

Microsoft's report on governing AI in India provides five policy suggestions while emphasizing the importance of ethical AI, human control over AI systems, and the need for multilateral frameworks to ensure responsible AI development and deployment worldwide.

The rapid development of artificial intelligence poses risks similar to those seen with social media, including disinformation, misuse, and effects on the job market, according to Microsoft President Brad Smith. Smith emphasized the need for caution and guardrails to ensure the responsible development of AI.

Salesforce has released an AI Acceptable Use Policy that outlines the restrictions on the use of its generative AI products, including prohibiting their use for weapons development, adult content, profiling based on protected characteristics, medical or legal advice, and more. The policy emphasizes the need for responsible innovation and sets clear ethical guidelines for the use of AI.

Microsoft President Brad Smith advocates national and international regulation of artificial intelligence (AI), emphasizing that safeguards and laws must keep pace with the rapid advancement of AI technology. He believes that AI can bring significant benefits to India and the world, but also stresses the responsibility that comes with it. Smith praises India's data protection legislation and digital public infrastructure, stating that India has become one of the most important countries for Microsoft. He also highlights the necessity of global guardrails on AI and the need to prioritize safety and build safeguards.

The increasing investment in generative AI and its disruptive impact on various industries have brought the need for regulation to the forefront. Technologists and regulators recognize the importance of ensuring safer technological applications but differ on the scope of regulation needed. However, it is argued that existing frameworks and standards, similar to those applied to the internet, can be adapted to regulate AI and protect consumer interests without stifling innovation.

Microsoft is poised to become the leading operating system for AI, as it takes advantage of the expanding AI market and leverages its existing ecosystem and user base, according to Oppenheimer analyst Timothy Horan.

Although its importance is widely acknowledged, only 6% of business leaders have established clear ethical guidelines for the use of artificial intelligence (AI), underscoring the need for technology professionals to step up and take leadership in the safe and ethical development of AI initiatives.

Artificial intelligence expert Michael Wooldridge is not worried about the growth of AI, but is concerned about the potential for AI to become a controlling and invasive boss that monitors employees' every move. He says that more immediate and concrete existential concerns, such as the escalation of the conflict in Ukraine, are more important things to worry about.

Artificial intelligence (AI) tools can put human rights at risk, according to researchers from Amnesty International speaking on the Me, Myself, and AI podcast. They discuss scenarios in which AI is used to track activists and make automated decisions that can lead to discrimination and inequality, and they emphasize the need for human intervention and changes in public policy to address these issues.

The UK government has been urged to introduce new legislation to regulate artificial intelligence (AI) in order to keep up with the European Union (EU) and the United States, as the EU advances with the AI Act and US policymakers publish frameworks for AI regulations. The government's current regulatory approach risks lagging behind the fast pace of AI development, according to a report by the science, innovation, and technology committee. The report highlights 12 governance challenges, including bias in AI systems and the production of deepfake material, that need to be addressed in order to guide the upcoming global AI safety summit at Bletchley Park.

The authors propose a framework for assessing the potential harm caused by AI systems in order to address concerns about "Killer AI" and ensure responsible integration into society.

The author suggests that developing safety standards for artificial intelligence (AI) is crucial, drawing upon his experience in ensuring safety measures for nuclear weapon systems and highlighting the need for a manageable group to define these standards.

The use of AI in the entertainment industry, such as body scans and generative AI systems, raises concerns about workers' rights, intellectual property, and the potential for broader use of AI in other industries in ways that infringe on human connection and privacy.

The digital transformation driven by artificial intelligence (AI) and machine learning will have a significant impact on various sectors, including healthcare, cybersecurity, and communications, and has the potential to alter how we live and work in the future. However, ethical concerns and responsible oversight are necessary to ensure the positive and balanced development of AI technology.

Former Google executive Mustafa Suleyman warns that artificial intelligence could be used to create more lethal pandemics by giving humans access to dangerous information and allowing for experimentation with synthetic pathogens. He calls for tighter regulation to prevent the misuse of AI.

A survey of 213 computer science professors suggests that a new federal agency should be created in the United States to govern artificial intelligence (AI), while the majority of respondents believe that AI will be capable of performing less than 20% of tasks currently done by humans.

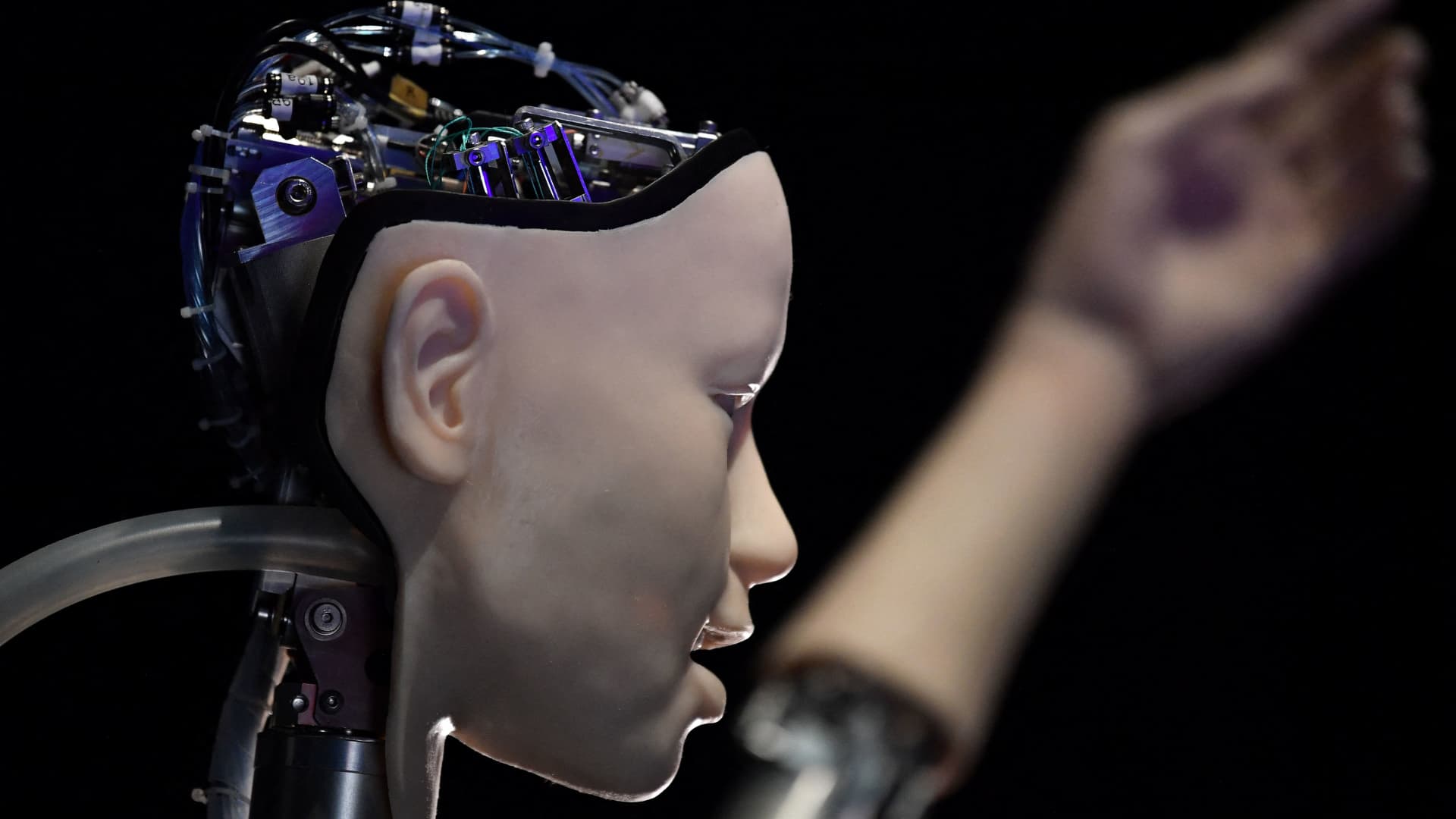

Robots have been causing harm and even killing humans for decades, and as artificial intelligence advances, the potential for harm increases, highlighting the need for regulations to ensure safe innovation and protect society.

The lack of regulation surrounding artificial intelligence in healthcare is a significant threat, according to the World Health Organization's European regional director, who highlights the need for positive regulation to prevent harm while harnessing AI's potential.

The race between great powers to develop superhuman artificial intelligence may lead to catastrophic consequences if safety measures and alignment governance are not prioritized.

Congressman Clay Higgins (R-LA) plans to introduce legislation prohibiting the use of artificial intelligence (AI) by the federal government for law enforcement purposes, in response to the Internal Revenue Service's recently announced AI-driven tax enforcement initiative.

The G20 member nations have pledged to use artificial intelligence (AI) in a responsible manner, addressing concerns such as data protection, biases, human oversight, and ethics, while also planning for the future of cryptocurrencies and central bank digital currencies (CBDCs).

Countries around the world, including Australia, China, the European Union, France, G7 nations, Ireland, Israel, Italy, Japan, Spain, the UK, the UN, and the US, are taking various steps to regulate artificial intelligence (AI) technologies and address concerns related to privacy, security, competition, and governance.

AI has the potential to fundamentally change governments and society, with AI-powered companies and individuals usurping traditional institutions and creating a new world order, warns economist Samuel Hammond. Traditional governments may struggle to regulate AI and keep pace with its advancements, potentially leading to a loss of global power for these governments.

Renowned historian Yuval Noah Harari warns that AI, as an "alien species," poses a significant risk to humanity's existence, as it has the potential to surpass humans in power and intelligence, leading to the end of human dominance and culture. Harari urges caution and calls for measures to regulate and control AI development and deployment.

Eight more companies, including Adobe, IBM, Palantir, Nvidia, and Salesforce, have pledged to voluntarily follow safety, security, and trust standards for artificial intelligence (AI) technology, joining the initiative led by Amazon, Google, Microsoft, and others, as concerns about the impact of AI continue to grow.

California Senator Scott Wiener is introducing a bill to regulate artificial intelligence (AI) in the state, aiming to establish transparency requirements, legal liability, and security measures for advanced AI systems. The bill also proposes setting up a state research cloud called "CalCompute" to support AI development outside of big industry.

Microsoft President Brad Smith supports the idea of federal licenses and a new regulatory agency for powerful AI platforms, in contrast to tech giants like Google, which prefer voluntary guidelines with limited penalties.

Artificial Intelligence poses real threats due to its newness and rawness, such as ethical challenges, regulatory and legal challenges, bias and fairness issues, lack of transparency, privacy concerns, safety and security risks, energy consumption, data privacy and ownership, job loss or displacement, explainability problems, and managing hype and expectations.

Tech heavyweights, including Elon Musk, Mark Zuckerberg, and Sundar Pichai, overwhelmingly agreed on the need to regulate artificial intelligence during a closed-door meeting with US lawmakers convened to discuss the potential risks and benefits of AI technology.

Spain has established Europe's first artificial intelligence (AI) policy task force, the Spanish Agency for the Supervision of Artificial Intelligence (AESIA), to determine laws and provide a framework for the development and implementation of AI technology in the country. Many governments are uncertain about how to regulate AI, balancing its potential benefits with fears of abuse and misuse.

Artificial intelligence-run robots have the ability to launch cyberattacks on the UK's National Health Service (NHS) that could cause disruption similar in scale to that of the COVID-19 pandemic, according to cybersecurity expert Ian Hogarth, who emphasized the importance of international collaboration in mitigating the risks posed by AI.

The Subcommittee on Cybersecurity, Information Technology, and Government Innovation discussed the federal government's use of artificial intelligence (AI) and emphasized the need for responsible governance, oversight, and accountability to mitigate risks and protect civil liberties and privacy rights.

Artificial intelligence (AI) requires leadership from business executives and a dedicated and diverse AI team to ensure effective implementation and governance, with roles focusing on ethics, legal, security, and training data quality becoming increasingly important.

Adversaries and criminal groups are exploiting artificial intelligence (AI) technology to carry out malicious activities, according to FBI Director Christopher Wray, who warned that while AI can automate tasks for law-abiding citizens, it also enables the creation of deepfakes and malicious code, posing a threat to US citizens. The FBI is working to identify and track those misusing AI, but is cautious about using it itself. Other US security agencies, however, are already using AI to combat various threats, while concerns about China's use of AI for misinformation and propaganda are growing.

President Biden has called for the governance of artificial intelligence to ensure it is used as a tool of opportunity and not as a weapon of oppression, emphasizing the need for international collaboration and regulation in this area.

Governments worldwide are grappling with the challenge of regulating artificial intelligence (AI) technologies, as countries like Australia, Britain, China, the European Union, France, G7 nations, Ireland, Israel, Italy, Japan, Spain, the United Nations, and the United States take steps to establish regulations and guidelines for AI usage.

A new poll reveals that 63% of American voters believe regulation should actively prevent the development of superintelligent AI, challenging the assumption that artificial general intelligence (AGI) should exist. The public is increasingly questioning the potential risks and costs associated with AGI, highlighting the need for democratic input and oversight in the development of transformative technologies.

Artificial intelligence (AI) is advancing rapidly, but current AI systems still have limitations and do not pose an immediate threat of taking over the world, although there are real concerns about issues like disinformation and defamation, according to Stuart Russell, a professor of computer science at UC Berkeley. He argues that the alignment problem, or the challenge of programming AI systems with the right goals, is a critical issue that needs to be addressed, and that regulation is necessary to mitigate the potential harms of AI technology, such as the creation and distribution of deepfakes and misinformation. The development of artificial general intelligence (AGI), which would surpass human capabilities, would be the most consequential event in human history and could either transform civilization or lead to its downfall.

Artificial intelligence will be a significant disruptor in various aspects of our lives, bringing both positive and negative effects, including increased productivity, job disruptions, and the need for upskilling, according to billionaire investor Ray Dalio.

While many experts are concerned about the existential risks posed by AI, Mustafa Suleyman, cofounder of DeepMind, believes that the focus should be on more practical issues like regulation, privacy, bias, and online moderation. He is confident that governments can effectively regulate AI by applying successful frameworks from past technologies, although critics argue that current internet regulations are flawed and do not sufficiently hold big tech companies accountable. Suleyman emphasizes the importance of limiting AI's ability to improve itself and establishing clear boundaries and oversight to ensure enforceable laws. Several governments, including the European Union and China, are already working on AI regulations.

AI adoption is rapidly increasing, but it is crucial for businesses to establish governance and ethical usage policies to prevent potential harm and job loss, while using AI to automate tasks, augment human work, enable change management, and make data-driven decisions, and while prioritizing employee training.

The U.S. government must establish regulations and enforce standards to ensure the safety and security of artificial intelligence (AI) development, including requiring developers to demonstrate the safety of their systems before deployment, according to Anthony Aguirre, the executive director and secretary of the board at the Future of Life Institute.