Main Topic: Increasing use of AI in manipulative information campaigns online.

Key Points:

1. Mandiant has observed the use of AI-generated content in politically motivated online influence campaigns since 2019.

2. Generative AI models make it easier to create convincing fake videos, images, text, and code, posing a threat.

3. While the impact of these campaigns has been limited so far, AI's role in digital intrusions is expected to grow.

Main Topic: The use of generative AI image models and AI-powered creativity tools.

Key Points:

1. The images created using generative AI models are for entertainment and curiosity.

2. The images highlight the biases and stereotypes within AI models and should not be seen as accurate depictions of the human experience.

3. The post promotes AI-powered infinity quizzes and encourages readers to become Community Contributors for BuzzFeed.

### Summary

The rise of generative artificial intelligence (AI) is making it difficult for the public to differentiate between real and fake content, raising concerns about deceptive fake political content in the upcoming 2024 presidential race. However, the Content Authenticity Initiative is working on a digital standard to restore trust in online content.

### Facts

- Generative AI is capable of producing hyper-realistic fake content, including text, images, audio, and video.

- Tools using AI have been used to create deceptive political content, such as images of President Joe Biden in a Republican Party ad and a fabricated voice of former President Donald Trump endorsing Florida Gov. Ron DeSantis' White House bid.

- The Content Authenticity Initiative, a coalition of companies, is developing a digital standard to restore trust in online content.

- Truepic, a company involved in the initiative, uses camera technology to add verified content provenance information to images, helping to verify their authenticity.

- The initiative aims to display "content credentials" that provide information about the history of a piece of content, including how it was captured and edited.

- The hope is for widespread adoption of the standard by creators to differentiate authentic content from manipulated content.

- Adobe is having conversations with social media platforms to implement the new content credentials, but no platforms have joined the initiative yet.

- Experts are concerned that generative AI could further erode trust in information ecosystems and potentially impact democratic processes, highlighting the importance of industry-wide change.

- Regulators and lawmakers are discussing how to address the challenges posed by AI-generated fake content.
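
The "content credentials" idea in the facts above (a verifiable history of how a piece of content was captured and edited) can be illustrated with a minimal sketch. This is not the Content Authenticity Initiative's standard or Truepic's implementation. It is a toy hash chain in which every name (`SIGNING_KEY`, `add_entry`, `verify`) is hypothetical, and a shared-secret HMAC stands in for the public-key signatures a real system would presumably use.

```python
import hashlib
import hmac
import json

# Hypothetical signing key for illustration only; a real provenance system
# would rely on public-key certificates rather than a shared secret.
SIGNING_KEY = b"demo-key-not-for-production"


def _digest(data: bytes) -> str:
    """Return the SHA-256 hex digest of the content bytes."""
    return hashlib.sha256(data).hexdigest()


def _sign(entry: dict) -> str:
    """Sign a history entry using a deterministic JSON encoding."""
    payload = json.dumps(entry, sort_keys=True).encode("utf-8")
    return hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()


def add_entry(history: list, action: str, content: bytes) -> list:
    """Return a new history with one signed entry describing `content`."""
    entry = {
        "action": action,
        "content_hash": _digest(content),
        # Linking to the previous signature makes the history tamper-evident.
        "previous_signature": history[-1]["signature"] if history else None,
    }
    return history + [{**entry, "signature": _sign(entry)}]


def verify(history: list, content: bytes) -> bool:
    """Check every link in the history and that it ends at `content`."""
    previous = None
    for record in history:
        entry = {k: v for k, v in record.items() if k != "signature"}
        if entry["previous_signature"] != previous:
            return False
        if not hmac.compare_digest(_sign(entry), record["signature"]):
            return False
        previous = record["signature"]
    return bool(history) and history[-1]["content_hash"] == _digest(content)


original = b"raw pixels from the camera"
edited = b"raw pixels from the camera, cropped"
history = add_entry([], "captured", original)
history = add_entry(history, "cropped", edited)
print(verify(history, edited))              # True
print(verify(history, b"tampered pixels"))  # False
```

In this sketch, changing either the content or any earlier history entry causes verification to fail, which is the property that lets a viewer trust the displayed capture and edit history.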

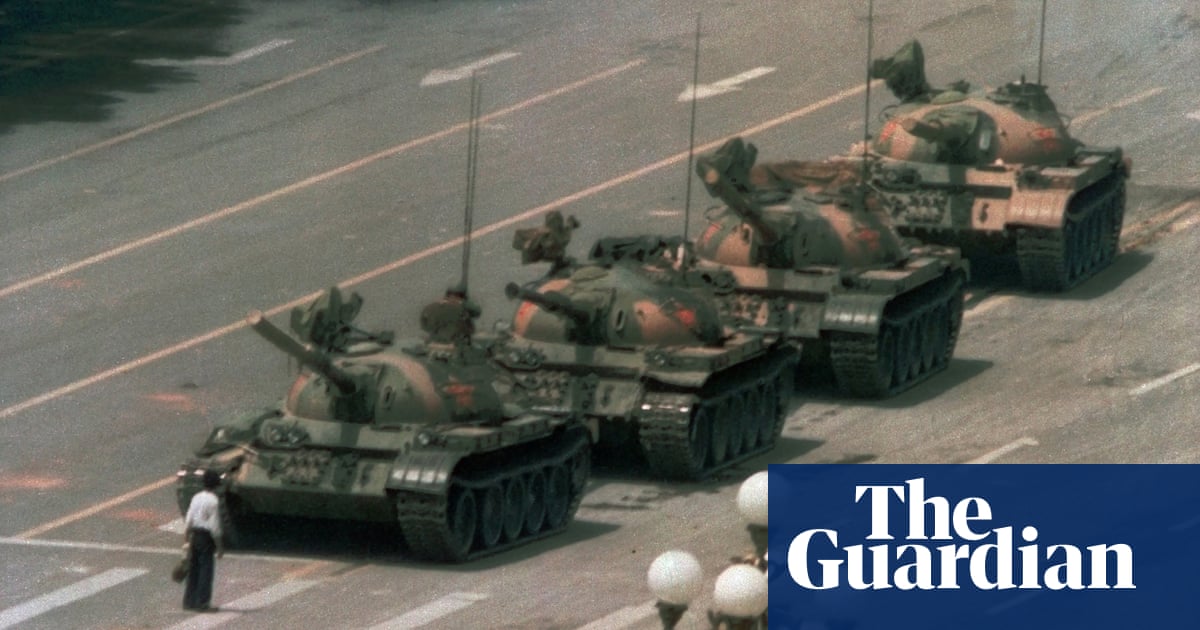

The emergence of artificial intelligence systems that can quickly generate photorealistic images has the potential to distort society's understanding of reality, manipulate historical records, and disrupt the credibility of photography as a witness to events, raising concerns about the future of human creativity, expression, and communication.

AI Algorithms Battle Russian Disinformation Campaigns on Social Media

A mysterious individual known as Nea Paw has developed an AI-powered project called CounterCloud to combat mass-produced AI disinformation. In response to tweets from Russian media outlets and the Chinese embassy that criticized the US, CounterCloud produced tweets, articles, and even journalists and news sites that were entirely generated by AI algorithms. Paw believes that the project highlights the danger of easily accessible generative AI tools being used for state-backed propaganda. While some argue that educating users about manipulative AI-generated content or equipping browsers with AI-detection tools could mitigate the issue, Paw believes that these solutions are neither effective nor elegant. Disinformation researchers have long warned that AI language models could be used for personalized propaganda campaigns and to influence social media users. Evidence of AI-powered disinformation campaigns has already emerged, with academic researchers uncovering a botnet powered by the AI language model ChatGPT. Legitimate political organizations, such as the Republican National Committee, have also used AI-generated content, including fake images. AI-generated text can still be fairly generic, but with human finesse it becomes highly effective and difficult to detect using automated filters. OpenAI has expressed concern about its technology being used to create tailored automated disinformation at large scale, and while it has updated its policies to restrict political usage, effectively blocking the generation of such material remains a challenge. As AI tools become increasingly accessible, society must become aware of their presence in politics and protect against their misuse.

Deceptive generative AI-based political ads are becoming a growing concern, making it easier to sell lies and increasing the need for news organizations to understand and report on these ads.

Generative AI is being used to create misinformation that is increasingly difficult to distinguish from reality, posing significant threats such as manipulating public opinion, disrupting democratic processes, and eroding trust. To combat this, experts advise skepticism, attention to detail, and not sharing content that may be AI-generated.

AI technology is making it easier and cheaper to produce mass-scale propaganda campaigns and disinformation, using generative AI tools to create convincing articles, tweets, and even journalist profiles, raising concerns about the spread of AI-powered fake content and the need for mitigation strategies.

Artificial intelligence will play a significant role in the 2024 elections, making disinformation easier to produce but ultimately having less impact than anticipated. Separately, paranoid nationalism is corrupting global politics through scaremongering and abuse of power.

AI-generated deepfakes have the potential to manipulate elections, but research suggests that the polarized state of American politics may actually inoculate voters against misinformation regardless of its source.

Google will require verified election advertisers to disclose when their ads have been digitally altered, including through the use of artificial intelligence (AI), in an effort to promote transparency and responsible political advertising.

Google has updated its political advertising policies to require politicians to disclose the use of synthetic or AI-generated images or videos in their ads, aiming to prevent the spread of deepfakes and deceptive content.

Chinese operatives have used AI-generated images to spread disinformation and provoke discussion on divisive political issues in the US as the 2024 election approaches, according to Microsoft analysts, raising concerns about the potential for foreign interference in US elections.

AI on social media platforms is seen as a potential threat to voter sentiment in the upcoming US presidential election: China-affiliated actors are leveraging AI-generated visual media to emphasize politically divisive topics, while companies like Accrete AI are employing AI to detect and predict disinformation threats in real time.

Artificial intelligence (AI) poses a high risk to the integrity of the election process, as evidenced by the use of AI-generated content in politics today, and there is a need for stronger content moderation policies and proactive measures to combat the use of AI in coordinated disinformation campaigns.

China is employing artificial intelligence to manipulate American voters through the dissemination of AI-generated visuals and content, according to a report by Microsoft.

AI-generated images in Copy Magazine reveal the uncanny perfection of fashion photography and serve as a warning to break free from repeating past styles, prompting questions about ethics and copyright in AI image generation.

With the rise of AI-generated deepfakes, there is a clear and present danger of these manipulated videos and photos being used to deceive voters in the upcoming elections, making it crucial to combat this disinformation for the sake of election integrity and national security.

More than half of Americans believe that misinformation spread by artificial intelligence (AI) will impact the outcome of the 2024 presidential election, with supporters of both former President Trump and President Biden expressing concerns about the influence of AI on election results.

Generative AI can be prompted to create supposedly unbiased representations of politicians from text prompts, but the resulting images often reflect regional stereotypes.

The Royal Photographic Society surveyed its members and found that 95% believe traditional photography is still necessary despite the advancement of AI-generated images, and 81% do not consider images created by AI to be "real photography," citing concerns about stolen content and a potential increase in fake news.

AI-generated content is becoming increasingly prevalent in political campaigns and poses a significant threat to democratic processes as it can be used to spread misinformation and disinformation to manipulate voters.

AI-generated deepfakes pose serious challenges for policymakers, as they can be used for political propaganda, incite violence, create conflicts, and undermine democracy, highlighting the need for regulation and control over AI technology.

An AI-generated image of Senator Rand Paul in a bathrobe has gone viral on social media, sparking debate about senators' dress code and highlighting the prevalence of AI-generated fakes and misinformation on platforms like Twitter.

Deepfake images and videos created by AI are becoming increasingly prevalent, posing significant threats to society, democracy, and scientific research as they can spread misinformation and be used for malicious purposes; researchers are developing tools to detect and tag synthetic content, but education, regulation, and responsible behavior by technology companies are also needed to address this growing issue.

Artificial intelligence (AI) has the potential to facilitate deceptive practices such as deepfake videos and misleading ads, posing a threat to American democracy, according to experts who testified before the U.S. Senate Rules Committee.

Foreign actors are increasingly using artificial intelligence, including generative AI and large language models, to produce and distribute disinformation during elections, posing a new and evolving threat to democratic processes worldwide. As elections in various countries are approaching, the effectiveness and impact of AI-produced propaganda remain uncertain, highlighting the need for efforts to detect and combat such disinformation campaigns.

AI-altered images of celebrities are being used to promote products without their consent, raising concerns about the misuse of artificial intelligence and the need for regulations to protect individuals from unauthorized AI-generated content.

China's use of artificial intelligence (AI) to manipulate social media and shape global public opinion poses a growing threat to democracies, as generative AI allows for the creation of more effective and believable content at a lower cost, with implications for the 2024 elections.

AI-generated stickers are causing controversy as users create obscene and offensive images; Microsoft Bing's image generation feature has produced pictures of celebrities and video game characters committing the 9/11 attacks; a person has been injured by a Cruise robotaxi; and a new report reveals the weaponization of AI by autocratic governments. Separately, artists are increasingly concerned about their survival in a market where AI replaces them, and an interview highlights how AI is aiding government censorship and fueling disinformation campaigns.

Generative AI tools, including Facebook's AI sticker generator, are being used to create controversial and inappropriate content, such as violent or risqué scenes involving politicians and fictional characters, raising concerns about the misuse of such technology.

The authenticity of a historical photograph featured in the Welcome to Wrexham documentary is being questioned by viewers, with some suggesting it may have been generated by artificial intelligence (AI).

Some AI programs are incorrectly labeling real photographs from the war in Israel and Palestine as fake, highlighting the limitations and inaccuracies of current AI image detection tools.

The image of a charred body shared by the state of Israel was accused of being generated by AI, but experts and the AI detection tool itself have said that the photo is likely real.

Images of Hamas leadership were mistakenly believed to be AI-generated after they were run through an image "upscaler" to increase their resolution, highlighting the problem of misleading technology and the prevalence of fake images.

AI generators like Midjourney, DALL-E 3, and Stable Diffusion are creating a flood of fake images that blur the line between reality and fiction, making it increasingly difficult to distinguish between what's real and what's not.

Photos allegedly showing leaders of Hamas "living luxurious lives" and accused of being AI-generated were actually upscaled using artificial intelligence technology, highlighting the challenges of authenticating real images in an era where AI imaging technology is increasingly prevalent. The low-resolution images, taken almost a decade ago, were run through an upscaler, resulting in distorted faces and hands that led to the false accusation of fabrication.

Artificial intelligence (AI) is increasingly being used to create fake audio and video content for political ads, raising concerns about the potential for misinformation and manipulation in elections. While some states have enacted laws against deepfake content, federal regulations are limited, and there are debates about the balance between regulation and free speech rights. Experts advise viewers to be skeptical of AI-generated content and look for inconsistencies in audio and visual cues to identify fakes. Larger ad firms are generally cautious about engaging in such practices, but anonymous individuals can easily create and disseminate deceptive content.

Misleading campaign ads are becoming more deceptive with the use of AI-generated images, video, and audio to manipulate voter perceptions.

The war between Israel and Hamas has led to an abundance of false or misleading information online, including AI-generated images, making it difficult for fact-checkers to keep up with the disinformation.

Deepfake visuals created by artificial intelligence (AI) are expected to complicate the Israeli-Palestinian conflict, as Hamas and other factions have been known to manipulate images and generate fake news to control the narrative in the Gaza Strip. While AI-generated deepfakes can be difficult to detect, there are still tell-tale signs that set them apart from real images.

Artificial intelligence and deepfakes pose a significant challenge in the fight against misinformation during times of war, as demonstrated by the Russo-Ukrainian War, where AI-generated videos created confusion and distrust among the public and news media even though they were eventually debunked. This points to a need for deepfake literacy among the media and the general public to better discern real from fake content, since public trust in all media from conflicts may otherwise be eroded.

Two viral images circulating on social media, one depicting a camp for Israeli refugees in Palestinian territory and another showing Atletico Madrid fans holding a giant Palestinian flag, have been debunked as AI-generated images, according to experts in image analysis.